The Digital Marketplace helps government find and compare cloud services for their technology projects. I am the Service Manager for the Digital Marketplace and lead the multidisciplinary team tasked with its design and build.

All new or redesigned digital services being built in government need to meet the digital by default service standard. Services processing or likely to process over 100,000 transactions a year are assessed at GDS. Services with fewer than 100,000 transactions per year are internally assessed with the department self-certifying that they meet the standard.

Despite being a service that doesn’t have to be part of the formal service assessment process, we decided that it would be in the best long-term interest of the service to opt for an assessment.

Government isn’t building websites; it is building digital by default services to meet users’ needs. The standard is a crucial part of the DNA of those services and helps teams build services people can use.

The Digital Marketplace provides an important service to government buyers and it marks the next step in the process of opening up government to the wider supplier market.

The service standard as part of the everyday process

Having now been through an alpha and a beta assessment, we thought we should share some of what we’ve learnt. You can read the full details in the service assessment report published on GOV.UK.

For those of you who don’t know, each service is assessed on 26 points. Very early in our project - at inception - we went through all the points as a team, and made sure we understood clearly what was required of us.

Running through these points early on helps keep the standard in mind from the outset. The standard then becomes inherent to the process, which allows a team to concentrate on making a great service. It’s a useful exercise because the team is assembled from different places and its members have varying levels of experience of things like user-centred design or government security standards.

For example, when I joined, this was the first time anyone on the team had worked with user research. Going through the points on the service assessment helps to remind everyone on the team that each discipline matters, that accessibility can’t be added in at the end, and that user needs are balanced against security decisions rather than superseded by them.

Taking time to prepare

As a Service Manager, when I was preparing for the alpha assessment - my first assessment - I was advised that the assessment itself would be in the form of a discussion, not a presentation. I would be required to show and not tell; just come in prepared to show the thing and answer questions about the service.

The structure of the assessment is shared ahead of time so, as a team, you can get ready.

For both assessments I went through the questions with the core team. For the alpha assessment we also ran an additional session for everyone just to focus on things that we felt were important to highlight. There is room to tell the story of the things you, as a team, are proud of.

The assessment is conducted by a panel of subject-matter experts assembled from across GDS. One person leads, and the subject-matter experts ask questions relating to their areas to see evidence that the service meets the criteria. The meeting can last for up to 4 hours and works to establish whether your service meets the criteria for the level you are putting yourself forward for.

Building a service vs passing an assessment

Each stage of the service assessment requires you to demonstrate a particular aspect of how you are delivering your service. For alpha, that means having a good understanding of what your service needs to do, and for beta it’s about having a service which has a good tech stack, ready to go live.

Our first beta assessment for Digital Marketplace was at the end of September, as we were confident that we knew what our service was doing to meet the criteria and how we were going to address the issues we considered to be outstanding. In this first assessment the panel advised us that they couldn’t pass us on 4 criteria and that we should return for a reassessment when those had been fully addressed.

One of the criteria we didn’t pass was to do with the lack of product analytics being monitored. While we have had analytics technology in place from day one, the specific role was not yet filled. The choice we made was to wait for the right person. Our new Product Analyst, Lana, has already made a great impact and we can now demonstrate improvements that we’ve made based on her first findings.

There are things we know we are good at

The service design has been truly informed by user research and analysis of user needs. The team gave excellent examples of this during the assessment by describing the research-prompted features of saved searches, and/or searches, the need for repeatability, and service descriptions.

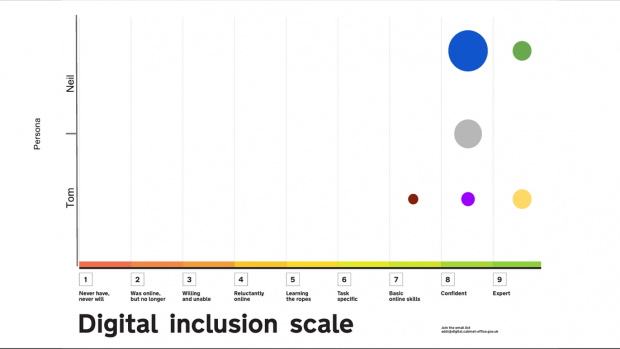

Every other time we see people as part of user research, we map where they sit on the Digital Inclusion scale. This allows us to have a really clear idea of who our users are beyond their level and knowledge of buying and selling. Our panel commented that this was the first time they’d seen this level of consideration.

Advice I would give

Buyers, service managers, builders of services: think about what it means to deliver to the service standard. Behaviours of agile delivery need to be embedded in your team and the way you think because you won’t be able to add them in at the end.

Don't forget:

- think about the criteria early

- service assessments are a team thing

- bring what you want to show off to the table

- prepare, prepare, prepare

- it’s not supposed to be a chore, but a way to make your service great

Suppliers: whether you are providing the cloud services that the digital by default services are being built on, or you are the team that is helping to define, test, build and deliver the service, you need to understand the full requirement. If you have a cloud-based service that you think can help government departments with delivering a digital service, G-Cloud 6 is open for submissions.

Follow us on Twitter @GOVUKDigiMkt, and visit our blog for regular updates.