A few months ago I wrote about how we're using search analytics and SEO (or Search Engine Optimisation for all the non-robots out there) to make sure that people get the right answer quickly when they look for government information using search engines. Well, the SEO landscape has changed recently, and the work being done to make GOV.UK good from a user perspective also helps our content to rank well in external search results.

Disclosure – this post is very Google-centric. It’s not the only search engine out there, but it does account for about 90% of all UK searches. So optimising for Google is a good place to start.

Google loves us...

Google likes authoritative sites, and treats most government sites as trustworthy and important. In search results we're like the parent figure; we might lack the cool, enticing appeal of commercial sites, but we provide reliable help and advice when you really need it.

...but not unconditionally

But if we don't use the same language as our users, provide relevant services and information, or have other sites linking to us, Google won’t rank GOV.UK highly.

Sometimes if you search for a topic that government will have the definitive answer to, potentially misleading answers will appear higher than us – not a good look.

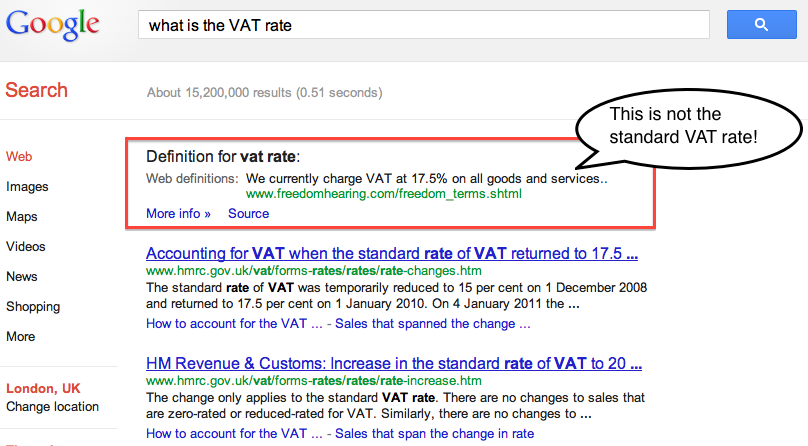

Take this example: search for "what is the VAT rate" and you'll see that the official answer sits beneath an incorrect answer from another site.

This is the kind of thing we're trying to fix.

Google's been a-changing

Google recently sent Panda and Penguin out to DESTROY THE LINK FARMS. They wreaked havoc, and banished the nasty spammy links from the golden heights of Google results.

Maybe I should explain. Google has made updates to its algorithm (think of this as the scanner that determines the value of each web page) to weed out poor-quality websites that have used sneaky SEO practices to rank highly in search. These updates are known as Panda and Penguin. Google want to serve up good websites in their top results, and now they can do this more effectively.

We’ve worked hard to optimise

Panda and Penguin may have got a lot of SEO practitioners hot under the collar, but they're good for GOV.UK. We're not trying to rank highly to sell, sell, sell. We care what people are looking for, not just what government wants to say. We've put a lot of effort into creating a great all-round user experience. This just so happens to fulfil Google's best practice requirements, which are that sites:

- are easy to use, navigate and understand

- contain actionable information

- are well-designed and browser-friendly

- contain high-quality, trustworthy content

We’ve also shaped our user needs with search data right from the beginning and done a lot of keyword research along the way to really get into the detail of what people want. Add to that the SEO best practices that help us to rank well in search, and also improve the user’s journey:

- titles, meta-descriptions and slugs that are short, keyword-filled, and descriptive

- focussed related links on each page, informed by user data and editorial expertise

- content that's not duplicated unnecessarily
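To make the first of those points concrete, here is a minimal sketch of how a title could be turned into a slug and how a page's metadata could be checked for length and keywords. It is an illustration only, not how GOV.UK does it: the function names `slugify` and `metadata_problems` and the two length limits are hypothetical, picked for the example.

```python
import re
import unicodedata

# Hypothetical length limits, chosen for illustration only.
MAX_TITLE_CHARS = 65
MAX_DESCRIPTION_CHARS = 160


def slugify(title: str) -> str:
    """Turn a page title into a short, lowercase, hyphen-separated slug."""
    # Strip accents so the slug stays plain ASCII.
    ascii_title = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Replace every run of non-alphanumeric characters with one hyphen,
    # then drop any hyphen left at either end.
    return re.sub(r"[^a-z0-9]+", "-", ascii_title.lower()).strip("-")


def metadata_problems(title: str, description: str, keyword: str) -> list[str]:
    """Return a list of human-readable problems; an empty list means none found."""
    problems = []
    if len(title) > MAX_TITLE_CHARS:
        problems.append("title is too long")
    if len(description) > MAX_DESCRIPTION_CHARS:
        problems.append("description is too long")
    # A simple case-insensitive substring check for the search term.
    if keyword.lower() not in title.lower():
        problems.append("title does not contain the keyword")
    if keyword.lower() not in description.lower():
        problems.append("description does not contain the keyword")
    return problems
```

For example, `slugify("What is the VAT rate?")` gives `what-is-the-vat-rate`, and `metadata_problems("VAT rates", "The current VAT rates for goods and services.", "VAT rates")` returns an empty list. A check like this only catches the mechanical problems; whether the words match what people actually search for still comes from the keyword research.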

But as with all things digital the proof will be in the numbers – we're gearing up to measure our effectiveness post-launch, and iterate, iterate, iterate.

17 comments

Comment by Ash young posted on

Google seem to be looking at testing the information boxes that are displayed at the top of searches. We've noticed this with a lot of clients that pop into and then out of these boxes almost on a daily basis.

It's interesting to see that large government sites have similar issues.

Comment by Jhon posted on

[...] about who will be doing the searching. Good SEO practice (as demonstrated by the nice people at GDS) has never been more important. [...]

Comment by Search Price posted on

Thanks for the article on .edu and .gov backlinks, interesting what Matt Cutts is now saying about Google testing turning off backlink relevance:

http://www.searchenginejournal.com/matt-cutts-explains-google-search-link-without-backlinks-ranking-tool/91495/

Comment by Web Design posted on

Really informative and engaging content.

Comment by SEO 24/7 UK (@SEO247UK) posted on

I found this an interesting post to read - as an SEO agency we know the value of having a link from a .gov domain website, but had never considered what it's like actually 'being' a .gov website! Definitely something for us to consider in the future.

It's also very interesting to note that Google are still reporting a VAT of 17.5%, with the same source as is cited in the image you've shown above. Despite all their recent updates for 'user experience' (Hummingbird, I'm looking at you) there are still some blind spots! It's hard to imagine a Google result being wrong, as so many people have an automatic response to a dispute - "let's Google it".

We've got a blog post brewing about the latest Google updates, if you're interested keep an eye on our blog: http://www.seo247.co.uk/article.html

Comment by The SEO Works (Sheffield) posted on

In our experience as an agency, we have found that it is much easier to rank stronger sites by changing details such as the meta titles and descriptions. Government sites are very strong, which should make it easier to get to the top for chosen phrases.

I find it absolutely fascinating that Google got it wrong! As you mentioned above, if I was searching for this information I would probably take it at face value and not research it any further!

We also regularly blog about the latest Google updates and ranking factors: http://www.seoworks.co.uk/category/blog

Comment by Jason Baker posted on

Here is an Update Readers....

In 2013, Google is Planning to give its social love more importance rather than link love... So try to be more active on Social Media and Social Sharing websites....

Up to now there is no information update from Google on how they are treating .edu and .gov backlinks, but I am sure they are giving them value as these are the most valued and authority links...

Comment by Andrew Hughes posted on

Don't forget about Bing and Yahoo, who are gaining more of the market share in UK searches and offering similar website facilities, including their equivalent of Google Places. Don't get left behind putting all your resources into just Google searches...

Comment by Keith D Mains posted on

Whilst it is fair to say one should not ignore Bing and Yahoo, Andrew, it is much more important to worry about one's Google search results, what with Google having circa 90% of the search usage quota.

Now that said one also has to bear in mind there is very little difference in doing SEO for Google or Bing.

Note I say Google or Bing. This is because Yahoo has been using the Bing engine for some time now, and unless Marissa Mayer (Bogue) changes that, this looks set to continue for a while yet. One would not get left behind by being more concerned with one's Google results and treating one's Yahoo and Bing results in a merely cursory way. Though cursory may give a "hurried" intonation that should be seen from a time perspective.

I think Google's market share of search is still strong as people may have tried Bing but they have soon resorted back to Google after Bing's initial hype has died down. Bing results are far too slow to update and give the best results so people naturally turn back to Google.

Comment by SEO performance on launch: comparing GOV.UK with Directgov | Government Digital Service posted on

[...] search engine optimisation landscape is changing. As I have blogged previously, we are doing our best to make sure we use the same search terms as [...]

Comment by How is GOV.UK performing? | Government Digital Service posted on

[...] If the weekly GOV.UK visits and unique visitors end up being too low then we need to understand where people are getting lost and improve our redirects and search engine optimisation. [...]

Comment by Tom Blogs » There are no “regular results” on Google anymore. posted on

[...] about who will be doing the searching. Good SEO practice (as demonstrated by the nice people at GDS) has never been more important. [...]

Comment by Meeting the needs of businesses | Government Digital Service posted on

[...] people thinking about starting a business find their way to the Business Link website via search engines. They want to know what they need to think about before starting, what to do when they take the [...]

Comment by poultrykeeper.com (@poultrykeeper) posted on

As a webmaster, you used to have to be able to write html code. Then, with content management systems and editors appearing, more and more people could create websites. To rank well you could create authoritative content.

Nowadays, you are absolutely right, every webmaster has to learn about the importance of SEO and the impact it will have on your search engine position, however good your content is.

Some years ago, people would search the web and scroll through a number of pages to find the result they wanted. Nowadays people look at the first few results and if they don't see the result they want, they refine their search.

The result of this means that unless you are on the first page of the results then you see a huge decrease in traffic.

Comment by Alec Cochrane (@whencanistop) posted on

It sounds like you are doing some good work on the SEO front. I wrote a blog post about the SEO on the three old sites (which I guess the NHS.uk one is staying):

http://www.whencanistop.com/2011/09/seo-review-of-uk-government-websites.html

I guess number one on this list is that your domain name is not a .gov.uk domain name and you probably don't intend to change this at the moment. One of the biggest challenges you will face is that if departments insist on having identical copy on gov.uk and their own website, then it is likely that their own website will rank highest.

That said, looking at the numbers and iterating changes to make sure that you rank higher is the best way of doing SEO (too many people solely look at technical details, whereas putting in the phrases that people search for is far more effective!).

Good luck!

PS - you might want to put a 'noindex' on your printer friendly pages (but only if you don't repurpose the print page template for other reasons).

Comment by lanagibsongds posted on

Thanks for your comment. Your post has some interesting points, and you'll be pleased to know that we've done our best to make all our URLs friendly - short, snappy and easily shared. In response to your points, as department websites will gradually be merged into the Inside Government section of GOV.UK, duplicate content will not be an issue, but we can place canonical tags to deal with any duplication that might occur. In the meantime we hope that GOV.UK as a whole will gain the authority to rank well where appropriate.

Comment by Keith D Mains posted on

I noticed recently a top SEO expert ranking on page 1 of Google for a search for my own name after I had made a post on said SEO's blog.

I investigated this and found that this strong ranking was due to settings repeating my name on his site in close proximity.

Now in the example above you point out a site ranking above the .Gov site with incorrect information.

Sadly it is this strength in repetition which gives such sites the edge.

This can be addressed, however, by careful and discreet use of repetition in ways that do not detract from the end-use readability.

That repetition is mainly taken care of by matching the URL to the page title and the on-page heading, and ensuring the words are in close proximity to the beginning of the first paragraph.

Thus ensuring focus of "search term" to the "search query" to help those using said search term find the information.

The problem though I see facing a site like the Gov site is that it is quite likely many search phrases will be used to search for the same thing and it is impossible for the site to outrank all other sites all of the time.

How many different searches could there be to find the current VAT rate, for example? There are clearly many ways to search for this. I would say the site would have its work cut out to try and find ways to cover such things.

There are a number of ways this can be done, but whether the Gov's sites would use said techniques or would have thought of them is another matter.