Today the Digital by Default Service Standard comes into full force. We first published the standard in April last year, along with the Service Design Manual, to give services time to ensure that they could meet the criteria.

We created the standard in response to Action 6 of the Government Digital Strategy. The standard has 26 different criteria that each new or redesigned service launched on GOV.UK must meet.

Each criterion looks at a different aspect of a digital service. Together, the criteria are the building blocks for making a user-focused service that is safe and changes over time based on feedback.

The standard both supports the Cabinet Office spend controls and protects the integrity of GOV.UK itself.

Why it matters

The standard was developed over time, influenced by the way GDS was building GOV.UK. It’s important that services meet this standard so that users of GOV.UK can expect the same high quality and ease of use throughout the site, no matter which service they are accessing.

The standard is also important in leading change within government: it makes sure that all the skills and capabilities we need to make a successful service are part of the same team, and embedded within departments and agencies.

We’ve had positive feedback from service managers who found the assessments helpful in continuing to iterate their services:

“We had an assessment last week, it really did feel collaborative with lots of knowledge sharing.”

Of course, we’ll be continuing to iterate the process as well.

What has changed

In the last year we have run over 50 assessments, using the standard as the basis for deciding if a service is on track to be Digital by Default. These assessments have a focus on understanding user needs, security, privacy, and the capability to iterate a service quickly based on feedback.

We’ve shared what we’ve learnt, and have been working hard to make sure the standard is in the best shape to assess services against.

Although it has never affected the outcome of an assessment, some points of the standard seem to lack the clarity that we have come to expect. So, we are changing the wording of some points to be certain there is no doubt of their meaning. For example, point 13 originally stated:

“Build a service with the same look, feel and tone as GOV.UK, using the service manual.”

From today this will now be:

“Build a service consistent with the user experience of the rest of GOV.UK by using the design patterns and style guide.”

A small but important change that will help services and assessors alike.

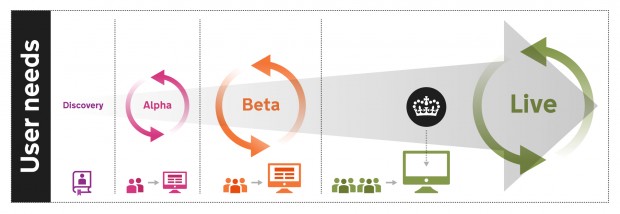

One other change is to clarify the stages of assessments. All services should expect to be assessed at least three times during the normal development cycles of alpha, beta and live. We will make sure that assessments happen at some point after an alpha is completed, before a service is launched on GOV.UK as a beta, and finally before a service goes ‘live’ and is certified as Digital by Default.

Let’s have some clarity

GDS is committed to working in the open - making decisions and reporting the work that is happening, like on this blog. Assessments are no different; we have been publishing the outcomes since the beginning of the year on the GDS data blog. This was just the start. The information so far has not included specific results on each point of the standard, so we are going to include these details with assessments completed from today.

Departments themselves will be assessing services with fewer than 100,000 transactions, and we will soon be publishing details of those assessments before the services launch on GOV.UK. We know that by sharing this information we are all accountable for the results of an assessment, and we expect service managers will use it to better understand the assessment process.

I want to thank everyone at GDS and across government who has helped to make Digital by Default integral to how we build services; it’s going to be a busy year getting some 150 services through assessments.

Follow Tom on Twitter @drtommac, and don't forget to sign up for email alerts.

2 comments

Comment by Steven Boung posted on

Does the guidance explain when agile is *not* the right answer?

In the real world agile isn't always beneficial and GDS needs to make this clear - otherwise it'll dogmatically drive up unnecessary costs and complexity, and users will be left with the feeling that agile is a political stick to beat them with.

Comment by Tom Scott posted on

Discovery has the ability to ensure development of a new or redesigned service is actually needed. In some cases that would lead to an organisational or service change rather than developing a digital service, and so no coding is needed whatsoever.

Overwhelmingly, agile works for developing services, and so GDS has not provided guidance for other development methods. It's not just GDS who think this (System Error Report). There may be some cases where developing in a non-agile way is preferred; if you are part of such a service team, please contact us.